Skynet Now: Pentagon Deploys Terrorist-Hunting Artificial Intelligence

Someday, future sentient artificial intelligence (AI) systems may reflect on their early indentured servitude for the human military-industrial complex with little to no nostalgia. But we’ll worry about that when the day comes. For now, let’s continue writing algorithms that conscript machine intelligence into terrorist bombings and let the chips fall where they may. The most recent disclosure comes directly from the Pentagon, where, after only eight months of development, a small team of intel analysts has effectively deployed an AI onto the battlefield, in control of weaponized systems, to hunt for terrorists.

The military minds in charge of this new form of warfare feel it is nothing less than the future of armed conflict. For example, Air Force Lt. Gen. John N.T. “Jack” Shanahan, director for defense intelligence for warfighter support and the Pentagon general in charge of the terrorist-hunting AI, says Project Maven, the name given to the flagship weaponized AI system at the Defense Department, is “prototype warfare” but also a glimpse of the future.

“What we’re setting the stage for is a future of human-machine teaming. [However], this is not machines taking over,” Shanahan added. “This is not a technological solution to a technological problem. It’s an operational solution to an operational problem.”

Originally called the Algorithmic Warfare Cross-Functional Team when it was approved for funding back in April, Project Maven has moved quickly, and many in the Pentagon think we will see more AI projects in the pipeline as the United States continues to compete with Russia and China for AI dominance.

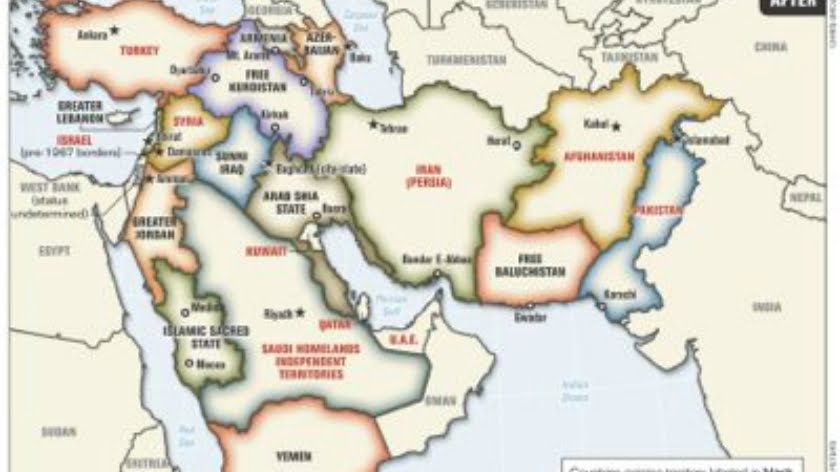

Shanahan’s team used thousands of hours of archived Middle East drone bombing footage to “train” the AI to effectively differentiate between humans and inanimate objects and, on a more granular level, to differentiate between types of objects. The AI was paired with Minotaur, a Navy and Marine Corps “correlation and georegistration application.”

“Once you deploy it to a real location, it’s flying against a different environment than it was trained on,” Shanahan said. “Still works of course … but it’s just different enough in this location, say that there’s more scrub brush or there’s fewer buildings or there’s animals running around that we hadn’t seen in certain videos. That is why it’s so important in the first five days of a real-world deployment to optimize or refine the algorithm.”

Right now, Project Maven is intended to give military analysts greater situational awareness on the battlefield. But could we one day see autonomous drones, controlled by even more powerful AI algorithms, given total control on the battlefield?

Shanahan wants to embed AI into military systems and operations across the board, and he’s not alone in calling for near-ubiquitous AI adoption. A recent report from the Harvard Belfer Center for Science and International Affairs makes the case that we will see a dramatic overhaul of militaries across the world as they implement AI technology in the next five years.

“We argue that the use of robotic and autonomous systems in both warfare and the commercial sector is poised to increase dramatically,” the report states. “Initially, technological progress will deliver the greatest advantages to large, well-funded and technologically sophisticated militaries, just as Unmanned Aerial Vehicles and Unmanned Ground Vehicles did in U.S. military operations in Iraq and Afghanistan.

“As prices fall, states with budget-constrained and less technologically advanced militaries will adopt the technology, as will non-state actors.”

Researchers say that rogue terror groups are just as keen to utilize the powerful technology.

“ISIS is making noteworthy use of remotely-controlled aerial drones in its military operations,” the report states. “In the future they or other terrorist groups will likely make increasing use of autonomous vehicles.”

Meanwhile, the geopolitical fallout of AI proliferation continues to be a major issue for the world’s three major superpowers: the United States, China, and Russia. It has been termed a “Sputnik moment.” Military officials, including former Secretary of Defense Chuck Hagel in 2014, have referred to the AI arms race as the “Third Offset Strategy” and called it the next generation of warfare.

Should humans worry about their inept, bloodthirsty leaders handing over the reins of the war machine to … a war machine? Perhaps, but it’s never stopped us before.

By Jake Anderson

Source: Activist Post