China’s “Social Credit” System: Dystopia by Design or Successful Social Engineering?

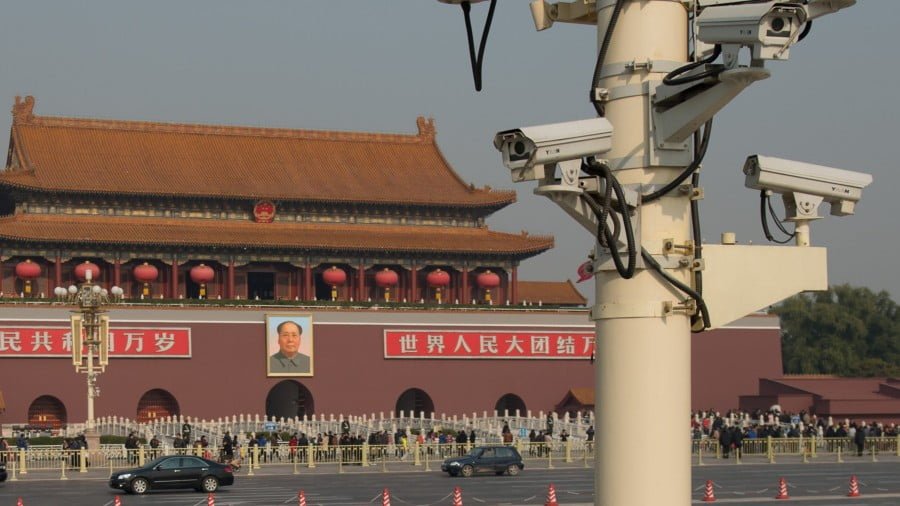

The Beijing municipal government recently unveiled its plan to implement China’s controversial “social credit” system by 2020.

Reuters, citing Xinhua, reported that the capital city will pioneer this interconnected social engineering and law enforcement program. For those who haven’t been following it, China wants to roll out a nationwide video surveillance system linked to its already active digital one. The combined system would allocate so-called “personal trustworthiness points” to every citizen and company, rewarding proper behavior with privileges and penalizing violations of socio-legal standards. The “social credit” system has allegedly been tested in Xinjiang as part of the central government’s anti-terrorist strategy there. According to a separate Reuters report published over the weekend, elements of it are being exported to Venezuela.

Western voices have criticized the program for bringing to life the surveillance state of George Orwell’s dystopian novel 1984. They warn that Chinese citizens’ personal freedom will be at risk, as will that of citizens in any country to which Beijing exports this technology. This criticism, however, ignores the fact that many societies, China among them, are not organized around the Western model. As an admittedly oversimplified contrast, China places the state over the citizenry and the collective over the individual. The West, whether sincerely or not, claims to practice the opposite model, placing the citizenry over the state and the individual over the collective.

The game-changer, however, is that China’s “social credit” system will introduce so-called “algorithmic governance”, in which complex AI programs replace human decision makers in enforcing the country’s socio-legal standards. This requires the government to eventually obtain full video and digital control over every aspect of the country. It must then integrate these two systems into a holistic one that also doles out the privileges and penalties associated with citizen and company behavior in real time. Moreover, such a massive endeavor requires gargantuan amounts of electricity to power the computer processors that run the system and store all its data, so it is probably still many years away from nationwide implementation.

Even so, with Beijing soon to pioneer this project in its publicly unveiled form, a few important points deserve consideration. It’s unclear at this moment whether subjects will be allowed to freely access their “social credit” file and appeal penalties they consider unfair. That in turn raises the question of exactly how long video and digital data will be retained. Another issue is whether the state will selectively release people’s information into the public domain in order to shame them and reinforce society’s overall adherence to the system’s standards. Depending on what’s publicly disclosed, this could have serious psychological consequences for the alleged violator.

On top of that, nobody knows how the algorithm works or whether certain socio-legal infractions can be expunged after some time or through various “rehabilitation programs”. If they cannot, youthful recklessness and irresponsibility that falls short of criminality could end up ruining someone’s life. Relatedly, the “social credit” system’s scoring is decontextualized from its subjects’ lives. An abusive relationship, for example, would not be taken into account to soften a violator’s punishment even if it influenced the infraction, as when a woman slaps her verbally abusive partner in public. This could prove troublesome in countless cases, but it remains incumbent on the Chinese themselves to work out any possible solutions.

Lastly, it’s unknown whether the “social credit” system will be applied to government and military officials or whether they’ll be “a class unto themselves” whose actions are judged by human beings rather than AI. The argument can be made that including them in the system could be abused for political purposes if a human overseer or “watchman” is corrupt. On the other hand, it could also hold them accountable for any crimes they commit and therefore increase the public’s trust in the Communist Party. It remains to be seen whether these and the many other questions surrounding China’s “social credit” system will be answered in the future. What’s known for sure is that the world’s most populous country is entering an unprecedented era of “algorithmic governance”.

By Andrew Korybko

Source: Oriental Review